This is the fifth part of the blog series which introduces the Azure Kubernetes Service (AKS).

Overview of the series:

- AKS Part 1 – general terms and availability options

- AKS Part 2 – network, storage and tools

- AKS Part 3 – security topics

- AKS Part 4 – scaling and monitoring

- AKS Part 5 – advanced integration with other services

- AKS Part 6 – cluster architecture, hints & tricks and hands-on

In this part, the topic is:

- Advanced integration with other (Azure) services

In addition to the automatic creation of storage resources and the NSG configuration, AKS integrates with numerous other Azure services and resources, some of which are briefly discussed here.

Usage of Azure Application Gateway as Ingress

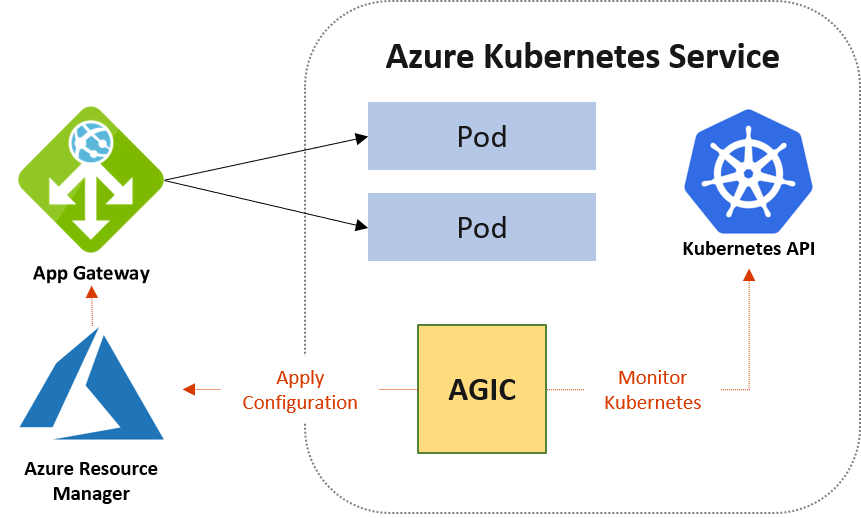

Ingress controllers are often used in a Kubernetes cluster to control traffic more granularly and implement certain rules. A common implementation is NGINX[1]. However, this leaves the resource load for the ingress controller in the cluster itself. An alternative is to use the Azure Application Gateway as the ingress controller.

The Azure Application Gateway is a Layer 7 load balancer with an optional integrated web application firewall. It also offers features such as TLS termination and URL path-based routing. With the Application Gateway Ingress Controller (AGIC) add-on enabled, the gateway serves as the ingress for the cluster, which moves the ingress load out of the cluster and lets the gateway scale independently. Because the application gateway communicates directly with the pods via their private IPs, it does not require NodePort or kube-proxy services, which takes additional load off the cluster. However, a CNI network is a prerequisite. Activating the add-on then creates the necessary Kubernetes Ingress resources, services and pods.[2]

The following CLI command activates the add-on in an existing cluster and links the cluster to an existing application gateway:

az aks enable-addons -n $aksClusterName -g $rgName -a ingress-appgw --appgw-id $appgwId
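Once the add-on is active, an Ingress resource can be routed through the gateway. The following is a minimal sketch rather than a complete setup: it writes an Ingress manifest to a file and assumes a Service called `demo-service` on port 80 already exists. The names `demo-ingress`, `demo-service` and `agic-ingress.yaml` are hypothetical; the `kubernetes.io/ingress.class` annotation is the one described in the AGIC documentation [2].

```shell
# Write a minimal Ingress manifest that asks AGIC to handle the traffic.
# demo-ingress and demo-service are hypothetical placeholder names.
cat > agic-ingress.yaml <<'EOF'
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: demo-ingress
  annotations:
    # Tells AGIC (instead of e.g. NGINX) to pick up this Ingress
    kubernetes.io/ingress.class: azure/application-gateway
spec:
  rules:
  - http:
      paths:
      - path: /
        pathType: Prefix
        backend:
          service:
            name: demo-service
            port:
              number: 80
EOF

# Apply it with a kubectl context that points at the AKS cluster:
#   kubectl apply -f agic-ingress.yaml
```

After the manifest is applied, the application gateway should pick up the route and forward requests for `/` to the pods behind the service.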

Azure Functions with KEDA

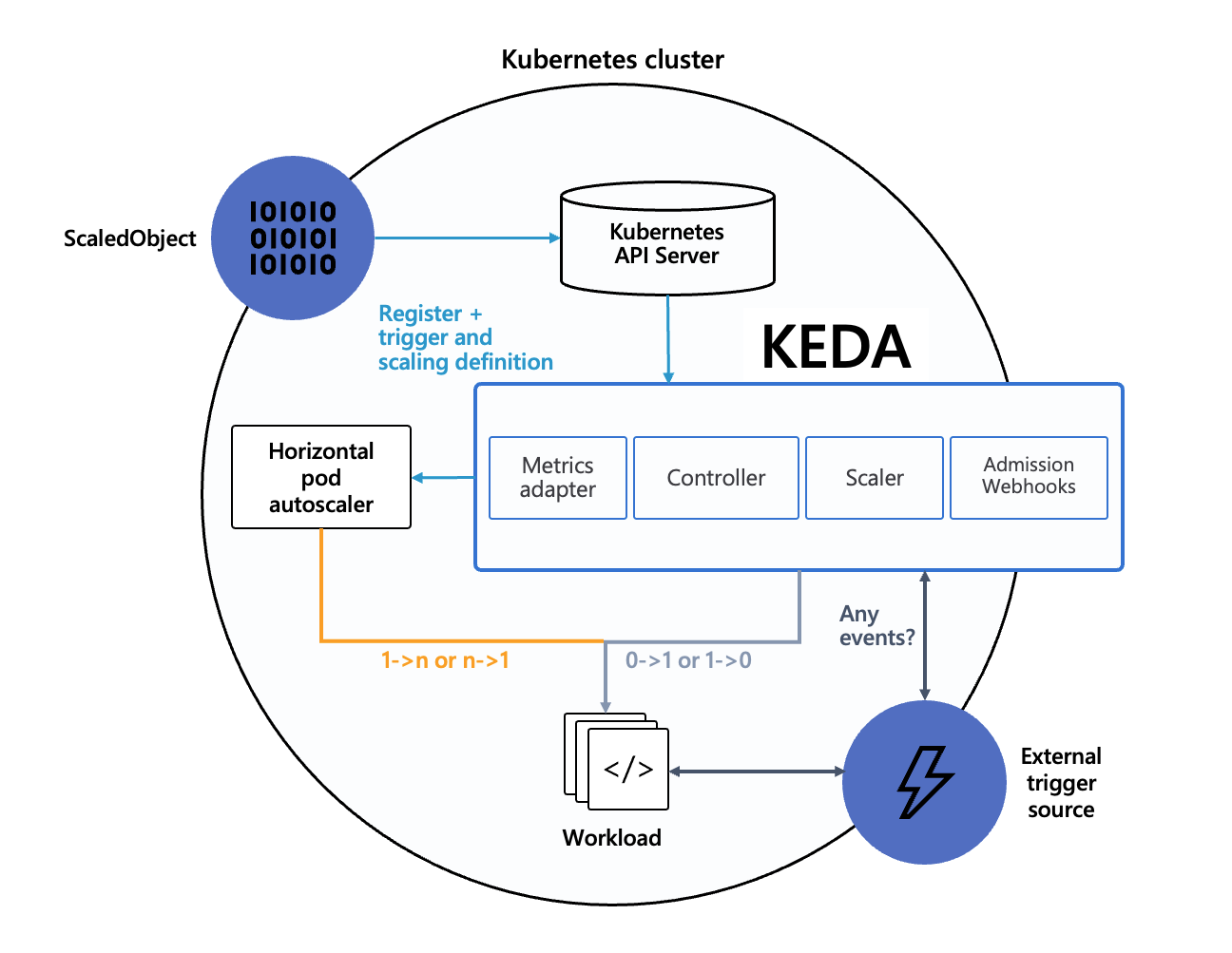

The KEDA framework has already been discussed in the Monitoring section. KEDA enables Azure Functions to be executed directly in the AKS cluster in a container. The Azure Function App must be created in container mode so that the runtime is available in the image. After KEDA has been installed in the cluster, the function app can be deployed to the cluster, e.g. via the Azure Functions Core Tools. This process creates a manifest with a Deployment, a ScaledObject and secrets for the environment variables from the local.settings.json file.
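The deployment steps described above might look roughly like the following sketch with the Azure Functions Core Tools. The commands are wrapped in a shell function so that nothing runs until it is called. The function name `deploy_function_to_aks` and its two arguments are hypothetical, and the sketch assumes that the project was created with Docker support (for example via `func init --docker`) and that `kubectl` already points at the AKS cluster.

```shell
# Sketch only: nothing is executed until the function is called.
# deploy_function_to_aks is a hypothetical helper, not part of any tool.
deploy_function_to_aks() {
  function_name="$1"   # name of the Kubernetes deployment to create
  registry="$2"        # container registry the image is pushed to

  # Install KEDA into the cluster (skip this if KEDA is already installed).
  func kubernetes install --namespace keda

  # Build the image from the project's Dockerfile, push it to the registry
  # and deploy the function together with its ScaledObject and secrets.
  func kubernetes deploy --name "$function_name" --registry "$registry"
}
```

A call could then look like `deploy_function_to_aks demo-function myregistry.azurecr.io`, where both values are placeholders. Adding `--dry-run` to `func kubernetes deploy` prints the generated manifest instead of applying it, which is useful for reviewing it first.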

Again, KEDA works with the HPA to scale the function container based on events, such as new messages in an Azure Storage queue or Service Bus queue, cron schedules or other triggers, and keeps instances running as long as there is work to be done.[3]
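To illustrate what such an event-based scaling rule looks like, the sketch below writes a hand-written ScaledObject for an Azure Storage queue trigger to a file. It is an approximation for illustration, not the exact output of the Core Tools. The names `demo-function`, `demo-function-scaler` and `demo-queue` as well as the replica limits and queue length are assumptions.

```shell
# Write a hand-written ScaledObject for a queue-triggered function.
# All names and numbers are hypothetical placeholders.
cat > keda-scaledobject.yaml <<'EOF'
apiVersion: keda.sh/v1alpha1
kind: ScaledObject
metadata:
  name: demo-function-scaler
spec:
  scaleTargetRef:
    name: demo-function          # Deployment that runs the function container
  minReplicaCount: 0             # scale to zero when the queue is empty
  maxReplicaCount: 10
  triggers:
  - type: azure-queue
    metadata:
      queueName: demo-queue
      queueLength: "5"           # target number of messages per replica
      connectionFromEnv: AzureWebJobsStorage
EOF

# Apply it with a kubectl context that points at the AKS cluster:
#   kubectl apply -f keda-scaledobject.yaml
```

With `minReplicaCount: 0`, no function pod runs while the queue is empty; KEDA activates the deployment when messages arrive and the HPA takes over scaling beyond the first replica.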

Azure Active Directory (AAD) Pod Identity

Provided that AAD integration is enabled for the cluster and a CNI network has been selected, AAD Pod Identity can be used to access Azure resources. It is not recommended with kubenet for security reasons. When the add-on is activated, additional pods run as a DaemonSet on the nodes to provide the functionality. With this approach, a pod's identity can be linked to an identity in Azure, which gives the pod the necessary access to a cloud resource. At the time of writing, this feature is only available on Linux nodes and is still in preview.[4]
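The link between a pod and an Azure identity is made through a label on the pod. The sketch below writes a pod manifest that uses the `aadpodidbinding` label as described in the pod identity documentation [4]. It assumes that a pod identity named `demo-pod-identity` has already been created for the cluster; that name, the pod name and the image are hypothetical placeholders.

```shell
# Write a pod manifest that requests an Azure identity via its label.
# demo-pod-identity, demo-app and the image are hypothetical placeholders.
cat > pod-with-identity.yaml <<'EOF'
apiVersion: v1
kind: Pod
metadata:
  name: demo-app
  labels:
    # Must match the name of the pod identity created for the cluster
    aadpodidbinding: demo-pod-identity
spec:
  containers:
  - name: demo-app
    image: myregistry.azurecr.io/demo-app:1.0.0
  nodeSelector:
    kubernetes.io/os: linux      # the feature is limited to Linux nodes
EOF

# Apply it with a kubectl context that points at the AKS cluster:
#   kubectl apply -f pod-with-identity.yaml
```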

Continuous Integration & Deployment

To round off the picture, the possible link to CI/CD pipelines, for example from Azure DevOps or GitHub Actions, should be mentioned once more. A code commit, for example, triggers the automatic build of new images, which are pushed to the container registry; a Helm upgrade step then makes the updated version of the application available in the AKS cluster. Helm is a package manager for Kubernetes resources and is integrated into AKS.[5]
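The core of such a pipeline can be sketched as three shell steps: build, push and Helm upgrade. This is an illustration under assumptions, not a complete pipeline definition. The registry, image, release and chart names are hypothetical, and the sketch assumes that the chart exposes an `image.tag` value. By default the commands are only printed; setting `DRY_RUN=0` would execute them.

```shell
# Hypothetical values; a real pipeline would normally inject these.
REGISTRY="myregistry.azurecr.io"
IMAGE="demo-app"
TAG="1.0.1"
RELEASE="demo-app"
CHART="./charts/demo-app"

# Print each command instead of running it unless DRY_RUN=0 is set.
run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then
    echo "+ $*"
  else
    "$@"
  fi
}

# 1. Build the new image from the committed code.
run docker build -t "$REGISTRY/$IMAGE:$TAG" .

# 2. Push the image to the container registry.
run docker push "$REGISTRY/$IMAGE:$TAG"

# 3. Roll out the new version; assumes the chart reads image.tag.
run helm upgrade --install "$RELEASE" "$CHART" --set image.tag="$TAG"
```

In Azure DevOps or GitHub Actions, each of these steps would typically be a separate task or action, with the tag derived from the commit or build number.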

[1] https://www.nginx.com/

[2] https://azure.github.io/application-gateway-kubernetes-ingress/

[3] https://microsoft.github.io/AzureTipsAndTricks/blog/tip277.html

[4] https://docs.microsoft.com/en-us/azure/aks/use-azure-ad-pod-identity

[5] https://docs.microsoft.com/en-us/azure/aks/kubernetes-helm