This is the second part of the blog series which introduces the Azure Kubernetes Service (AKS).

Overview of the series:

- AKS Part 1 – general terms and availability options

- AKS Part 2 – network, storage and tools

- AKS Part 3 – security topics

- AKS Part 4 – scaling and monitoring

- AKS Part 5 – advanced integration with other services

- AKS Part 6 – cluster architecture, hints & tricks and hands-on

This part covers the following topics:

- network options

- storage

- available tools

Network

AKS offers two network types for a cluster: kubenet and Azure Container Networking Interface (CNI).

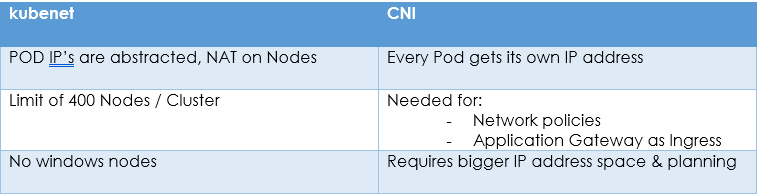

The kubenet variant is the simpler approach. Pod IP addresses are abstracted and do not have to be considered in the address pool of the virtual network (VNet); the pods are reached through Network Address Translation (NAT) on the nodes. Windows node pools, however, are not possible with kubenet.

With the CNI approach, each pod is assigned an IP address from the address pool of the VNet, so setting up the cluster requires more careful address planning. CNI must be chosen if the AKS Network Policies are to be used or if an Azure Application Gateway is to serve as the ingress resource.

Table 1 lists some other differences.

It should be noted that the network type cannot be changed after the cluster has been created.[1]
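To get a feeling for the address planning that the CNI approach calls for, the following Python sketch estimates how many VNet addresses a cluster would need under each network type. It assumes the sizing rule described in the Azure documentation (one spare node for upgrades, one address per node plus one per pod) and the five addresses that Azure reserves in every subnet. The function names, node count and subnet ranges are hypothetical and only serve as an illustration.

```python
import ipaddress

# Assumption: Azure reserves five addresses in every subnet.
AZURE_RESERVED_PER_SUBNET = 5


def required_ips_cni(node_count: int, max_pods_per_node: int) -> int:
    """Addresses needed when every pod receives a VNet IP (Azure CNI).

    One additional node is included as headroom for cluster upgrades.
    """
    nodes_with_spare = node_count + 1
    return nodes_with_spare + nodes_with_spare * max_pods_per_node


def required_ips_kubenet(node_count: int) -> int:
    """With kubenet only the nodes take VNet addresses; pods sit behind NAT."""
    return node_count + 1


def subnet_fits(cidr: str, required: int) -> bool:
    """Check whether a subnet offers enough usable addresses."""
    usable = ipaddress.ip_network(cidr).num_addresses - AZURE_RESERVED_PER_SUBNET
    return usable >= required


if __name__ == "__main__":
    nodes, max_pods = 50, 30  # hypothetical cluster size
    cni = required_ips_cni(nodes, max_pods)
    kubenet = required_ips_kubenet(nodes)
    print(f"CNI needs {cni} addresses, kubenet needs {kubenet}")
    for cidr in ("10.240.0.0/24", "10.240.0.0/22", "10.240.0.0/21"):
        print(cidr, "CNI ok:", subnet_fits(cidr, cni),
              "kubenet ok:", subnet_fits(cidr, kubenet))
```

Because the network type cannot be changed later, it is worth running such an estimate with the largest node count the cluster is expected to reach.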

"Magic" with kubectl

The kubectl tool is used to interact with the AKS cluster. A certain magic takes place here: Kubernetes commands also create and configure Azure resources at the same time. This is due to additional pods on the master nodes, for example CSI drivers for universal storage management or pods from the cloud-provider-azure project. The latter carry out the additional Azure load balancer and network security group configuration when an external service is created as a Kubernetes resource.[2]
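As a rough illustration of such an external service, the Python sketch below builds the manifest of a Kubernetes Service of type LoadBalancer and prints it as JSON, which kubectl accepts just like YAML. The service name, label and ports are hypothetical; what AKS does on the Azure side after the resource is created is described above and is not part of the code.

```python
import json


def load_balancer_service(name: str, app_label: str,
                          port: int, target_port: int) -> dict:
    """Build a Service manifest that requests an external load balancer."""
    return {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {"name": name},
        "spec": {
            # This type is what asks the cloud provider for a load balancer.
            "type": "LoadBalancer",
            "selector": {"app": app_label},
            "ports": [{"port": port, "targetPort": target_port}],
        },
    }


if __name__ == "__main__":
    # "demo-web" is a made-up name for illustration.
    manifest = load_balancer_service("demo-web", "demo-web", 80, 8080)
    print(json.dumps(manifest, indent=2))
```

If the block is saved as a script (the file name `service.py` is an assumption), its output could be piped into the cluster with `python service.py | kubectl apply -f -`.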

Storage

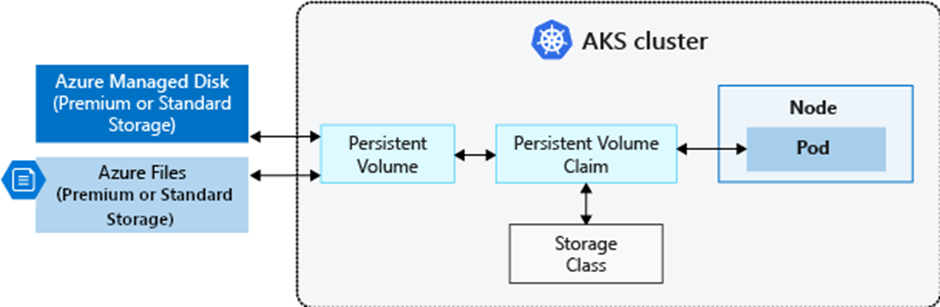

AKS can also support storage management. If local storage on the nodes themselves is not sufficient, for example because persistence is needed beyond the pod lifecycle, Azure Disks or Azure file shares can be connected. To do so, the Kubernetes resource Persistent Volume Claim (PVC) must be created and the volume mount specified in the deployment. Depending on the storage class used in the PVC, an Azure Disk or an Azure file share is then created automatically. Some storage classes are built in, but custom ones can be created as well.

In the background, the recommended and more modern approach uses the Azure Disk Container Storage Interface (CSI) driver. It works according to the CSI specification[3] and runs as a plug-in in the cluster, which makes it possible to create or adapt storage types without changing the Kubernetes core. The storage classes also control the deletion behaviour for the Azure resources when the volume is no longer mounted in pods.[4][5]
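The interplay of storage class, PVC and volume mount can be sketched in Python as follows. The example builds three manifests and prints them as one JSON list that kubectl can apply. It assumes the provisioner name `disk.csi.azure.com` and the `skuName` parameter of the Azure Disk CSI driver; all resource names, the image and the mount path are hypothetical. Instead of the custom class, a built-in one such as `managed-csi` could presumably be referenced in the PVC.

```python
import json


def storage_class(name: str, reclaim_policy: str = "Retain") -> dict:
    """Custom storage class backed by the Azure Disk CSI driver."""
    return {
        "apiVersion": "storage.k8s.io/v1",
        "kind": "StorageClass",
        "metadata": {"name": name},
        "provisioner": "disk.csi.azure.com",
        "parameters": {"skuName": "StandardSSD_LRS"},
        # Controls what happens to the Azure Disk once the claim is deleted.
        "reclaimPolicy": reclaim_policy,
        "volumeBindingMode": "WaitForFirstConsumer",
    }


def persistent_volume_claim(name: str, class_name: str, size: str) -> dict:
    """Claim that triggers the creation of a disk of the requested size."""
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": name},
        "spec": {
            "accessModes": ["ReadWriteOnce"],
            "storageClassName": class_name,
            "resources": {"requests": {"storage": size}},
        },
    }


def pod_with_volume(name: str, image: str, claim: str, mount_path: str) -> dict:
    """Pod that mounts the claimed volume at the given path."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            "containers": [{
                "name": name,
                "image": image,
                "volumeMounts": [{"name": "data", "mountPath": mount_path}],
            }],
            "volumes": [{
                "name": "data",
                "persistentVolumeClaim": {"claimName": claim},
            }],
        },
    }


if __name__ == "__main__":
    items = [
        storage_class("demo-disk-retain"),
        persistent_volume_claim("demo-data", "demo-disk-retain", "5Gi"),
        pod_with_volume("demo-app", "nginx", "demo-data", "/mnt/data"),
    ]
    print(json.dumps({"apiVersion": "v1", "kind": "List", "items": items}, indent=2))
```

With the reclaim policy set to `Retain`, the disk is kept when the claim is deleted; `Delete` would remove it together with the claim.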

Tools

Several tools are available for working with an AKS cluster. The Azure Portal deserves first mention: it provides a GUI, and its Cloud Shell can be used for console commands. The cluster itself can be managed via the GUI, which also offers options for cluster monitoring. In the Azure Cloud Shell, kubectl is already installed. Working with Visual Studio Code is also convenient, since corresponding extensions support it. Here, however, kubectl must be installed separately.

Other interesting options for cluster management are Octant, an open source project with a web-based interface,[6] and K9s, a terminal-based UI for interacting with a Kubernetes cluster.[7]

[1] https://docs.microsoft.com/en-us/azure/aks/concepts-network

[2] https://github.com/kubernetes-sigs/cloud-provider-azure

[3] https://github.com/container-storage-interface/spec/blob/master/spec.md

[4] https://docs.microsoft.com/en-us/azure/aks/azure-disk-csi

[5] https://docs.microsoft.com/en-us/azure/aks/concepts-storage

[7] https://k9scli.io/